When a videogame enters the Quality Assurance (QA) phase of its development, the team must make a delicate decision: which mobile devices the game will be tested on. In theory, the ideal scenario would be to test it on every single cell phone model out there. But given how quickly new cell phone models are produced each year, with software updates, hardware improvements, and modifications arriving every month, that ideal is unrealistic, to say the least, even for the largest mobile game publishers. On top of that, every cell phone model becomes obsolete within a few years. At the time of writing, Google Play Store shows more than 21,169 different registered Android devices. No wonder the decision of which cell phone models to test a videogame on is so sensitive and important just before jumping into QA. Unfortunately, there is no single clear criterion to guide this decision, so producers generally tend to adopt one of the following strategies:

Newest flagship phones

Image from gagadget.com

This strategy targets the “best phones” of well-known brands, like Samsung Galaxy S22 Ultra, Google Pixel 6 Pro, Oppo Find X5 Pro, Xiaomi 12, etc.

This selection may ensure that the game runs smoothly on the best phones on the market. Even if this course of action is good from a marketing perspective, it does not fully serve the purpose of QA, which is to find the issues and bugs users could sooner or later encounter when playing the game. Moreover, the number of people who own the latest models is very small compared to the overall market. But it’s also true that people purchasing the newest phones spend more time searching for new apps to install than users with older phones.

Best seller phones

Image from verizon.com

A different strategy focuses on the most successful phones in terms of sales. However, this approach has many nuances that need to be taken into account in order to implement it optimally. The following variables need to be considered:

- Cell phone models vary in success depending on countries and regions, as well as the different agreements between manufacturers and mobile carriers in each country.

- Cell phone model usage also differs between age groups, which leads to differences in the kinds of apps and games installed on those models.

- Cell phone models do not top the sales charts indefinitely. Their success and popularity vary from month to month, and even more from year to year.

To complicate matters, this data is not easily accessible online.

Most used phones

Image from actualidadgadget.com

This strategy is similar to the previous one but differs in its focus: developers choose the most used phones based on app store statistics for a certain time period. This data is more easily accessible if the videogame publisher has previously published other games. Google also shares some information in this regard, although these metrics are not thorough and give only a partial picture. Several concerns must also be taken into consideration:

- Whether the new launch is targeted at the same group of users as the previous one or not.

- Whether the previous game is still being downloaded and installed. If it is not, it is pertinent to ask whether that is due to a massive change in the cell phone models its users own.

- Finally, which models are most popular in the target market countries.

Device selection is not an easy task, and none of these strategies is perfect, but TagWizz has developed an approach that tackles all of the above in order to have the most comprehensive QA tests possible.

Device Specifications

When selecting devices by the above strategies, we are missing a very important aspect: while phones all differ from one another, they also share similarities.

Image from mobiforge.com

This is where smart device inventories come into play. A good QA outsourcing provider should define its device inventory strategically, by purchasing phones with different CPUs, GPUs, screen aspect ratios, and amounts of RAM. A common mistake is to test a game on a phone with a huge resolution and great processing speed and assume that it will automatically run smoother there than on a lower-tier phone, when in fact some games run smoother on those slower phones because they have fewer pixels to process!
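To put the "fewer pixels" point into numbers, here is a rough sketch in Python. The two resolutions are illustrative values rather than measurements of specific devices, and the sketch assumes the game renders at the screen's native resolution, which is not always the case.

```python
def pixels_per_frame(width: int, height: int) -> int:
    """Number of pixels to fill for one full-screen frame at native resolution."""
    return width * height

# Illustrative resolutions (assumptions, not taken from a specific device list).
high_end = pixels_per_frame(1440, 3088)  # a high-resolution flagship panel
low_end = pixels_per_frame(720, 1600)    # a typical budget panel

ratio = high_end / low_end
print(f"High-end: {high_end:,} px, low-end: {low_end:,} px, ratio: {ratio:.2f}x")
```

With these example values, the high-resolution phone has to fill almost four times as many pixels per frame, so its faster GPU does not guarantee a smoother result.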

Image from elrincondechina.com

But more importantly, comprehensive QA testing includes plenty of phones on which issues and bugs are more likely to pop up, and that happens more frequently on cheaper phones.

Firmwares

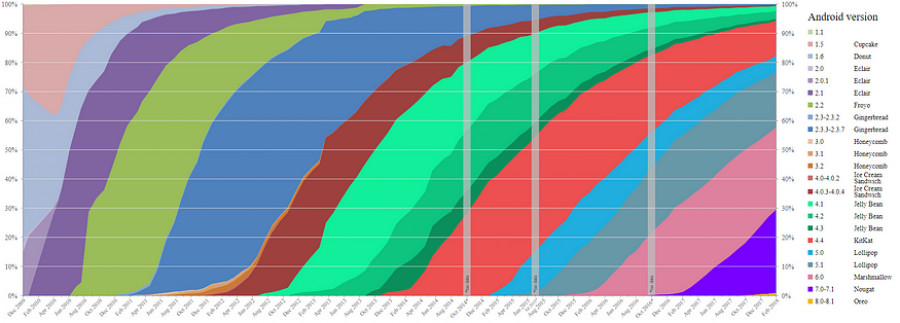

Firmware versions are extremely important as well: both Google and Apple frequently release new versions (and sub-versions) of their operating systems, and users then either upgrade to the latest version or not. In the case of Android phones, it is quite common that they do not, and not because users don’t want to, but because so many devices are released in such short periods of time that it is impossible for manufacturers and carriers to update the firmware of all their devices in parallel.

Image from wikipedia.com

It is unrealistic to try to calculate the number of software updates generated by this trend. On top of that, software updates may have sub-sub-versions for each mobile carrier in the world (one for Vodafone, one for Orange, one for AT&T, one for Verizon, one for Telcel, etc.). As a result, users run very different Android versions on their phones. This inevitably leads to bugs, because there may be compatibility issues between firmware versions. A game developer may use a cool feature of one version that does not work on previous versions. Or there may be a third-party component that does not work well on older firmware versions. Comprehensive QA testing needs to aim at covering as many of these possibilities as it can.
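One simple way to keep an eye on firmware coverage is to list which OS versions in the supported range have no test device at all. The sketch below assumes a hypothetical inventory of device names and Android major versions; the names, the version range, and the `missing_versions` helper are made up for illustration, and a real inventory would also track sub-versions and carrier builds.

```python
# Hypothetical inventory: (device name, Android major version installed).
inventory = [
    ("Phone A", 12),
    ("Phone B", 12),
    ("Phone C", 10),
    ("Phone D", 9),
]

def missing_versions(devices, min_version, max_version):
    """Return the major versions in [min_version, max_version] with no test device."""
    covered = {version for _, version in devices}
    return [v for v in range(min_version, max_version + 1) if v not in covered]

# Assume the game is meant to support Android 8 through 12.
gaps = missing_versions(inventory, 8, 12)
print("Versions without a test device:", gaps)
```

In this example, versions 8 and 11 have no device, so those are the firmware gaps to fill before testing starts.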

Conclusion

As a rule of thumb, the best device selection is a combination of all of the above: some flagship phones, some best seller phones, some cheap phones and some most used phones. Finally, fill the gaps in terms of device specifications so that the selection covers a wide range of CPUs, GPUs, memory, aspect ratios, etc. And don’t forget firmware versions!
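The rule of thumb above can be sketched as a two-step selection: first take one phone from each category, then greedily add whichever remaining phone covers the most specifications not yet represented. This is only a minimal illustration under stated assumptions, not TagWizz's actual method; the device names, categories, and spec labels are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Device:
    name: str
    category: str     # "flagship", "best_seller", "most_used" or "cheap"
    specs: frozenset  # labels such as "gpu:A", "ram:4GB", "aspect:20:9"

def select_devices(candidates, categories, max_devices):
    selected = []
    # Step 1: take the first candidate found in each category.
    for category in categories:
        if len(selected) >= max_devices:
            break
        for device in candidates:
            if device.category == category and device not in selected:
                selected.append(device)
                break
    # Step 2: greedily add the device that covers the most uncovered specs.
    covered = set().union(*(d.specs for d in selected))
    while len(selected) < max_devices:
        remaining = [d for d in candidates if d not in selected]
        best = max(remaining, key=lambda d: len(d.specs - covered), default=None)
        if best is None or not (best.specs - covered):
            break  # nothing left that adds new coverage
        selected.append(best)
        covered |= best.specs
    return selected

# Hypothetical candidate pool.
candidates = [
    Device("Flagship X", "flagship", frozenset({"gpu:A", "ram:12GB", "aspect:20:9"})),
    Device("Seller Y", "best_seller", frozenset({"gpu:B", "ram:6GB", "aspect:20:9"})),
    Device("Popular Z", "most_used", frozenset({"gpu:B", "ram:4GB", "aspect:19:9"})),
    Device("Budget W", "cheap", frozenset({"gpu:C", "ram:2GB", "aspect:16:9"})),
    Device("Budget V", "cheap", frozenset({"gpu:D", "ram:3GB", "aspect:18:9"})),
    Device("Seller U", "best_seller", frozenset({"gpu:B", "ram:6GB", "aspect:19:9"})),
]

picked = select_devices(
    candidates, ["flagship", "best_seller", "most_used", "cheap"], max_devices=5
)
print([d.name for d in picked])
```

Here the fifth slot goes to "Budget V" rather than "Seller U", because it adds a GPU, a RAM size, and an aspect ratio that the first four phones do not cover. Firmware versions could be added to `specs` in the same way.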

This article was written by Adrian Gimate-Welsh with the help of TagWizz’s group of experts.